Gaming's stupid argument about limitless smarts

Ironically, given this is all about intelligence, the games industry's argument about generative AI has become oddly stupid.

On one side are the evangelists, who treat every new model demo as proof that production costs are about to collapse and creativity is about to explode. On the other are the sceptics, who see synthetic assets, chatbot NPCs and AI-written marketing copy and conclude that the whole thing is just a sludge machine for devaluing craft.

Both sides are right about something. Both sides are also missing something.

The strongest criticism of generative AI in games is that it is often being sold as a solution to problems it does not actually solve. A chatbot NPC is not automatically a good character. Infinite dialogue is not the same as good writing. A generated quest is not the same as a designed experience.

Games are not just content containers. They are rule systems, pacing structures, emotional machines and authored spaces. The hard part is rarely producing more stuff. The hard part is producing stuff with meaning, tension, surprise, coherence and consequence.

This is where the anti-hype position has real force. Traditional game AI has already solved many difficult problems by being constrained, legible and designer-controlled. Behaviour trees, utility systems, pathfinding, encounter directors and scripted responses may sound less glamorous than large language models, but they exist because games require control.

Players need to understand why enemies behave as they do. Designers need to tune encounters. Writers need characters to remain consistent. Producers need performance budgets. Legal teams need to know exactly what shipped and who created it.

Generative AI disrupts all of that. It produces output, but output is not design. Worse, it often moves the work rather than removing it. Someone still has to review the asset, edit the text, check the lore, test the behaviour, moderate the abuse cases, verify the rights and decide whether the result is actually any good.

In a studio context, "AI saves time" can quickly become "AI creates a new layer of supervision, compliance and cleanup."

The sceptics are also right about consumer trust. Players may tolerate invisible AI tooling, but obvious generative slop carries reputational risk. AI-generated capsule art, bland item descriptions, synthetic voice lines and filler quests can make a game feel cheap even when the underlying technology is impressive.

In premium PC and console markets especially, audiences often read visible AI use as a signal that the developer cut corners.

But the weakness of the sceptical position is that it can become too defensive. It judges generative AI mainly by whether it improves existing forms – better concept art, better NPCs, faster quests, cheaper localisation. That is important, but it may not be where the real change happens.

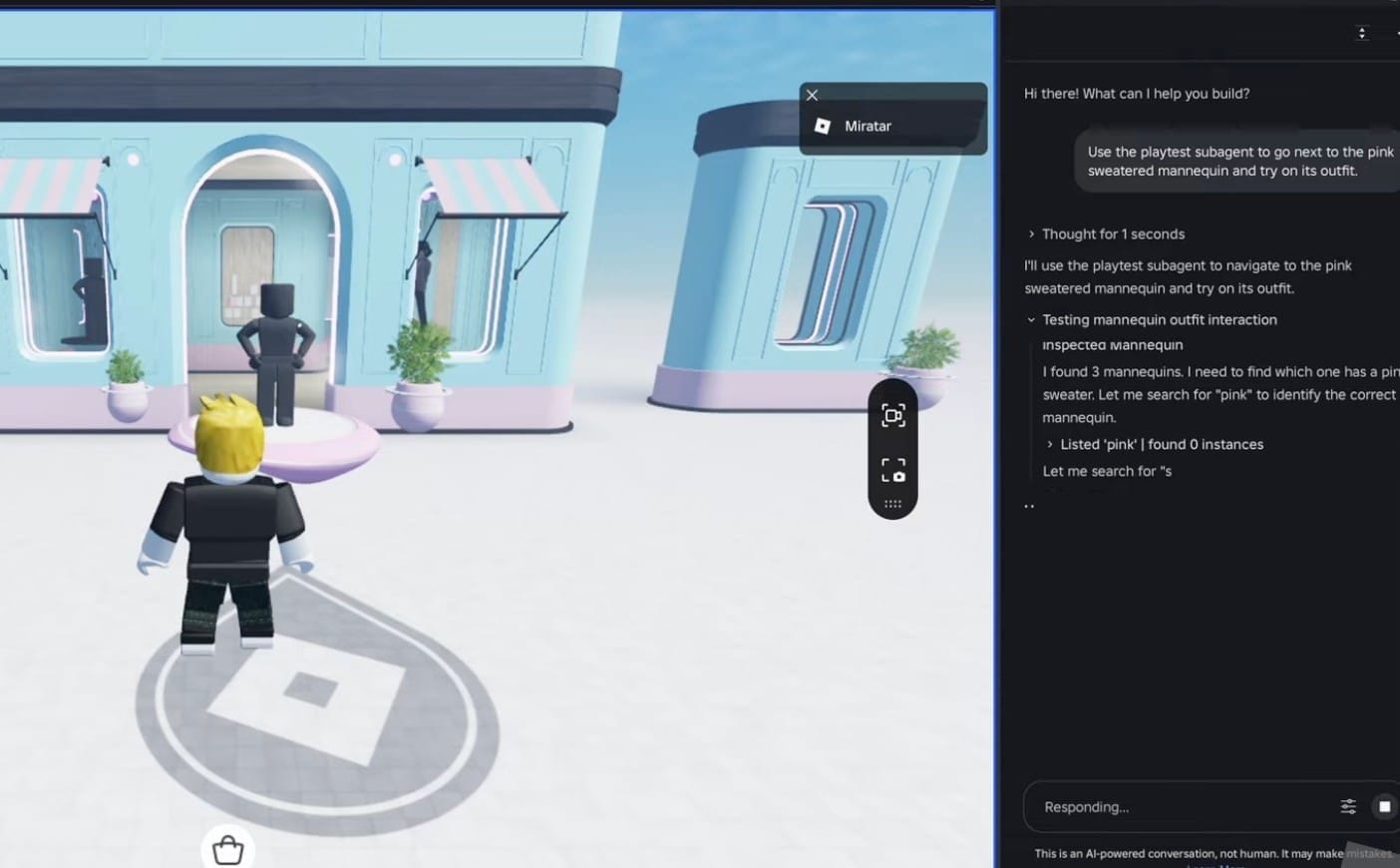

The more significant question is whether generative AI enables new forms of play. Not simply NPCs that talk more, but games built around negotiation, memory, persuasion and improvisation. Not merely generated content, but worlds that respond to the player's history, jokes, failures and style. Not just faster asset production, but player co-creation through natural language, sketches, rules and corrections. Not authored quests, but disposable, improvisational scenarios that behave more like tabletop sessions than fixed content drops.

The most promising area may be agentic systems rather than content generation. AI actors inside game economies could trade, organise, deceive, scout, govern, roleplay, exploit markets or form factions.

That is far more interesting than an AI-written tavern keeper. In onchain games, simulations, strategy worlds and autonomous environments, generative or agentic systems may become part of the game's living structure rather than a cheap content hose.

So the right position is neither enthusiasm nor rejection. Generative AI is a force multiplier. The question is what force it multiplies. In a disciplined studio, it may multiply iteration, prototyping, accessibility, localisation, testing and systemic play. In a lazy one, it will multiply filler, legal risk, blandness and noise.

The industry needs sceptics because the current consensus is intellectually lazy. "AI will transform games" is a prediction, not an argument. But scepticism also has to stay alive to the genuinely new. The critic's job is not to defend old craft boundaries – of both labour and content types – forever.

It is to ask whether the new tools are allowing a new type of creator to make new kinds of games or better games, or just more disposable content at marginal cost.

That is the distinction that matters. Generative AI should not be judged by how much it can produce. It should be judged by whether it creates forms of play that were previously impossible, uneconomic or unimaginable. Most of what we are seeing now does not meet that bar. Some of what comes next might.